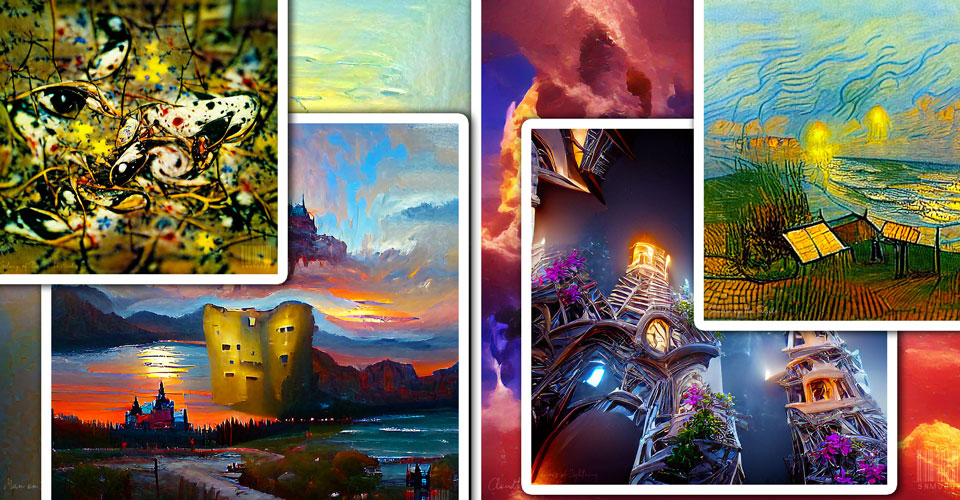

Art generated through VQGAN+CLIP & Diffusion+CLIP Python scripts on Google Colab. Enter your text and keywords – computer then collates a progression of images refining with every iteration (theoretically one could go on forever). The collation is based on a “training set” of images – basically images with text description – that attempt to replicate your text input. The more precise your keywords (you can add a painter whose style you wish to replicate or a gaming engine – I used “Unreal engine” as a keyword for some of those), the more accurate the script will be in generating what your input text is asking for. In theory, the sky’s the limit – as well as your graphics card and what resources you’ll be allocated through Google Colab (the paid-for version offers more stability, RAM, GPU power, speed and space). Every image is truly unique!

The difference between VQGAN and Diffusion is mainly how they handle the process of generating images. VQGAN concentrates on producing and refining one image and, if allowed, can take an infinite amount of time to do so. Diffusion, on the other hand, can produce up to 5 completely different images from the input text, but the results may not be as refined as what VQGAN can generate because the script has self-imposed time limits. Both work extremely well, depending on what you’re aiming for.

One caveat for both: Do not expect realism! Everything looks somewhat psychedelically impressionistic – spectacular nonetheless.

Added 2 videos in the VQGAN+CLIP section below to show the progression in image generation the VQGAN+CLIP scripts go through. The script produces roughly 12 images (frames) per second. It’s mindblowing what AI can do. I might as well give up trying to be a better artist when a computer can very easily beat us at our own game, eventhough it doesn’t really have any understanding and intention behind what it’s creating! Astounding and somewhat disconcerting! Skynet is indeed here… so we might as well enjoy it.

VQGAN+CLIP AI

Lightning Towers

03/01/2022

Man on the Edge of a Precipice

03/01/2022

Tower of Illusions

03/01/2022

Sunset at the Castle of Solitude

03/01/2022

Traffic Jam on a Rainy Night in Paris

03/01/2022

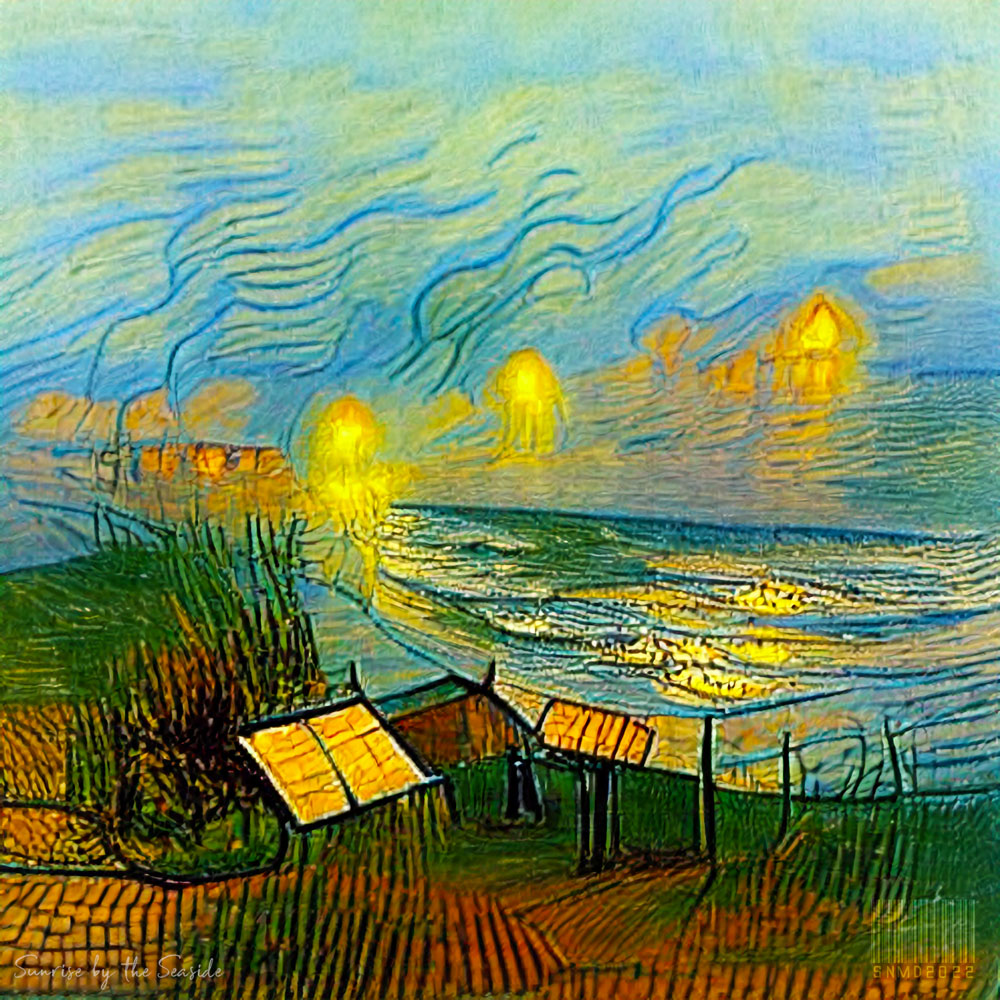

Sunrise by the Seaside – after Vincent Van Gogh

04/01/2022

Galaxy Of Stars in the Mind’s Eye – after Jackson Pollock

05/01/2022

Colours in the Mind’s Eye

05/01/2022

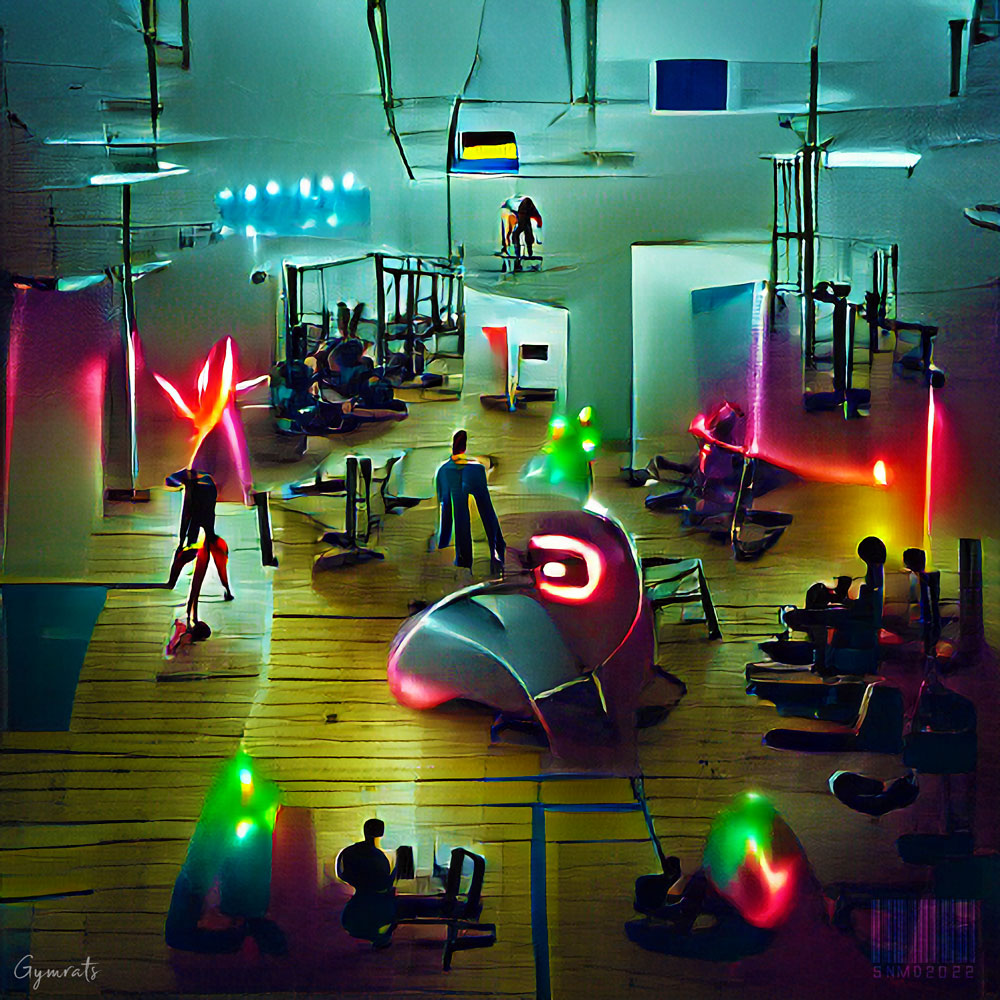

Gymrats

05/01/2022

Cloudburst

05/01/2022

Standing Among Clouds

05/01/2022

Manhattan Skyline on a Starry Night

06/01/2022

People Dancing Among the Stars – after Keith Haring

06/01/2022

Looking through a Mirror in the Sky

06/01/2022

Cyberpunk Winter Forest

07/01/2022

Baby Robots in a Lab Playground

07/01/2022

Winter Mountains Against Bright Blue Skies

07/01/2022

Visit Greg’s Artstation here

DIFFUSION+CLIP AI

Cyberpunk Wizard I

07/01/2022

Cyberpunk Wizard II

07/01/2022

Cyberpunk Wizard III

07/01/2022

Cyberpunk Wizard IV

07/01/2022

Cyberpunk Wizard V

07/01/2022

The Fire of a Hopeful Mind I

07/01/2022

The Fire of a Hopeful Mind II

07/01/2022

The Fire of a Hopeful Mind III

07/01/2022

Fearbox Cityscape I

07/01/2022

Fearbox Cityscape II

07/01/2022

Fearbox Cityscape III

07/01/2022